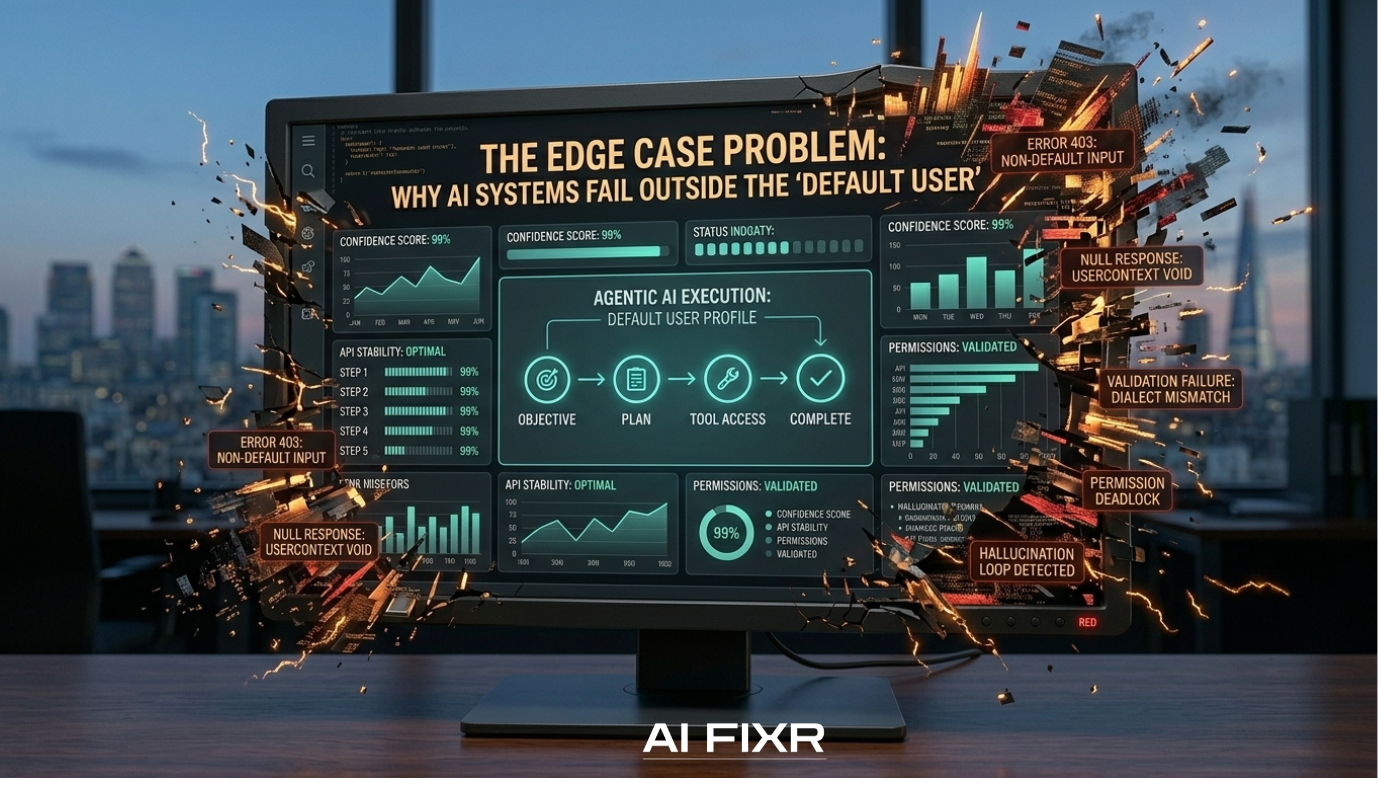

WHY AI FAILS: VOL 4 — The Edge Case Problem: Why AI Systems Fail Outside The “Default User”

In 2026, AI bias is no longer just a social conversation.

It is a system design failure.

Most enterprise AI systems don’t break loudly. They fail quietly, in the gaps between what was designed and what the real world actually looks like.

And those gaps are almost always the same:

The system was built for a “default user” that does not exist.

THE “DEFAULT USER” ILLUSION

When organisations design AI systems, they often assume a clean, standardised world:

predictable customer behaviour

consistent data formats

uniform naming conventions

structured inputs from every user

But real enterprise environments don’t work like that.

Real users are:

multilingual

context-dependent

inconsistent in how they provide information

shaped by different cultural, economic, and behavioural patterns

When AI systems are not trained or tested against that reality, they don’t fail randomly.

They fail systematically at the edges.

WHERE AI ACTUALLY BREAKS

Most AI failures in production don’t happen in ideal conditions.

They happen at the edge cases:

identity verification systems misreading non-standard formats

customer models misclassifying unfamiliar behaviour patterns

automation workflows failing when inputs don’t match “expected structure”

decision systems optimised for one type of user group, not all users

This is not a model problem.

It is a representation problem in the system design layer.
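
To make the "expected structure" failure concrete, here is a minimal sketch in Python. Everything in it is an illustrative assumption, not a rule taken from any real system: the `DEFAULT_USER_NAME` pattern, both function names, and the sample names are all made up. The first validator encodes a "default user" (two unaccented ASCII words); the second accepts letters from any script, plus spaces, apostrophes, and hyphens.

```python
import re
import unicodedata

# Hypothetical "default user" rule: exactly two unaccented ASCII words.
DEFAULT_USER_NAME = re.compile(r"[A-Za-z]+ [A-Za-z]+")


def naive_is_valid_name(name: str) -> bool:
    """Accept only names shaped like 'First Last' in plain ASCII."""
    return DEFAULT_USER_NAME.fullmatch(name) is not None


def tolerant_is_valid_name(name: str) -> bool:
    """Accept non-empty names made of letters or combining marks (any script),
    spaces, apostrophes, and hyphens."""
    cleaned = unicodedata.normalize("NFC", name).strip()
    if not cleaned:
        return False
    for ch in cleaned:
        # Unicode general categories starting with L are letters, M are marks.
        is_letter_or_mark = unicodedata.category(ch)[0] in ("L", "M")
        if not (is_letter_or_mark or ch in " '-\u2019"):
            return False
    # Require at least one actual letter, not just punctuation.
    return any(unicodedata.category(ch).startswith("L") for ch in cleaned)


# Made-up sample inputs: one "default" name and five ordinary edge cases.
names = [
    "Jane Smith",
    "José Ñíguez",
    "Siobhan O'Brien",
    "Sukarno",
    "王 秀英",
    "Anne-Marie Dubois",
]

rejected_by_naive = [n for n in names if not naive_is_valid_name(n)]
rejected_by_tolerant = [n for n in names if not tolerant_is_valid_name(n)]

print("Rejected by naive rule:   ", rejected_by_naive)
print("Rejected by tolerant rule:", rejected_by_tolerant)
```

Under these assumptions the naive rule rejects every name except the first, and no model is involved at any point. The failure sits entirely in what the system was designed to expect.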

THE SCAR TISSUE ADVANTAGE

In my six years working across enterprise AI systems, one pattern has become clear:

The people who consistently spot these failures early are not the ones who design for the “average.”

They are the ones who have spent their careers operating outside it.

That experience creates what I call scar tissue thinking:

You learn to recognise where systems assume too much.

You notice when “standard behaviour” isn’t actually standard.

You instinctively look for the edge case — because you’ve lived inside it.

And in AI systems, edge cases are where most production failures begin.

WHY THIS IS NOW A BUSINESS RISK

This is no longer just a design issue.

With AI systems now embedded into:

credit decisions

identity verification

customer service automation

operational decision-making

…blind spots become liabilities.

If a system consistently underperforms on certain user groups or behaviours, the impact is no longer theoretical — it becomes:

compliance exposure

biased financial decisions

reputational damage

regulatory scrutiny

In 2026, AI systems are not judged by how well they perform on average.

They are judged by how they behave under real-world variation.
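
A small worked example shows how an average can hide this. The sketch below is illustrative only: the function name `accuracy_by_group`, the group labels, and every number in it are made up for the arithmetic, not measured from any real system.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Take (group, was_correct) pairs and return
    (overall_accuracy, {group: accuracy})."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for group, was_correct in records:
        totals[group] += 1
        correct[group] += int(was_correct)
    if not totals:
        raise ValueError("records must not be empty")
    overall = sum(correct.values()) / sum(totals.values())
    per_group = {g: correct[g] / totals[g] for g in totals}
    return overall, per_group


# Made-up outcomes: 950 decisions for one group, 50 for another.
records = (
    [("majority", True)] * 930
    + [("majority", False)] * 20
    + [("minority", True)] * 30
    + [("minority", False)] * 20
)

overall, per_group = accuracy_by_group(records)
print(f"overall:  {overall:.1%}")
for group, acc in per_group.items():
    print(f"{group}: {acc:.1%}")
```

With these invented numbers the system is right 960 times out of 1,000, so the headline figure is 96%. The smaller group is right only 30 times out of 50, which is 60%. A report that shows only the average would never surface that gap.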

THE REAL FIX: DESIGN FOR VARIANCE, NOT AVERAGES

The organisations that will scale AI successfully are not the ones with the most advanced models.

They are the ones that design for:

variation, not uniformity

edge cases, not averages

real-world behaviour, not lab conditions

This requires more than better models.

It requires better system awareness:

diverse data inputs

broader testing environments

cross-context validation

continuous bias auditing at the system level
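
One simple starting point for "diverse data inputs" is to check whether your evaluation data covers the contexts you actually serve. The sketch below assumes each test sample carries a context label; the function name `coverage_gaps`, the locale codes, the counts, and the `min_count` threshold are all hypothetical choices for illustration.

```python
from collections import Counter


def coverage_gaps(sample_contexts, required_contexts, min_count):
    """Return {context: count} for every required context that has
    fewer than min_count samples in the evaluation set."""
    counts = Counter(sample_contexts)  # missing contexts count as 0
    return {c: counts[c] for c in required_contexts if counts[c] < min_count}


# Made-up evaluation set, labelled by the context each sample came from.
eval_set_contexts = (
    ["en-GB"] * 400 + ["en-US"] * 350 + ["es-MX"] * 12 + ["hi-IN"] * 3
)

# Contexts the (hypothetical) product is deployed in.
served_contexts = ["en-GB", "en-US", "es-MX", "hi-IN", "yo-NG"]

gaps = coverage_gaps(eval_set_contexts, served_contexts, min_count=50)
print(gaps)
```

Here three of the five served contexts fall under the threshold, and one has no test samples at all. A check like this does not measure bias by itself, but it shows where the system has never been tested, which is where the audit should look first.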

FINAL THOUGHTS

AI systems do not fail because intelligence is missing.

They fail because reality is wider than the assumptions built into them.

The “default user” is a design shortcut, not a reflection of the real world.

And every shortcut in system design eventually shows up as a production failure.

The question is not whether your AI works in ideal conditions.

It’s whether it survives contact with reality.

At AI FIXR, we focus on that gap: the space between what systems assume and what actually happens in production.

Because that is where AI either scales…

or quietly breaks.

If you are deploying AI into real customer environments and want to understand where your system may be failing at the edges, we run AI Fix Sessions to audit bias risk, system blind spots, and real-world failure points.

→ Book a session to stress-test your AI against reality, not assumptions.